A Focus on Trust

Benjamin Kuipers ’70 is exceptionally well qualified to address such questions. Emeritus Professor of Electrical Engineering and Computer Science at the University of Michigan, he has been involved in AI research for more than 50 years, and has lived through numerous AI booms and busts. Questions of ethics have never been too far from his mind since his time at Swarthmore.

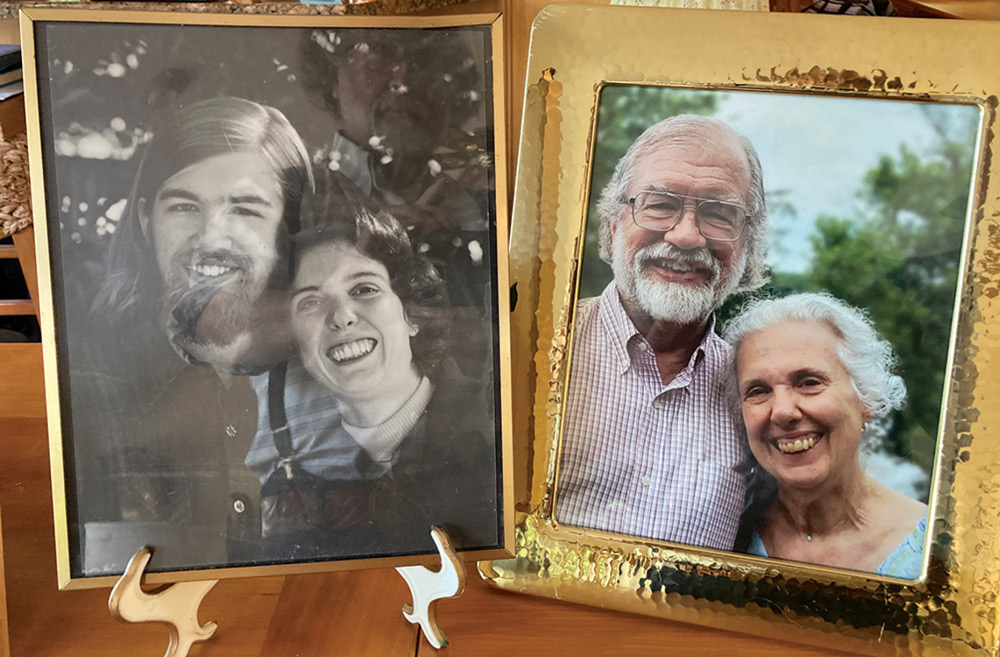

The draft board granted him conscientious objector status, but his own conscience continued to evolve. He had been brought up in the Christian Reformed Church. His mother had not wanted him to go to Swarthmore because she was afraid he would fall in love with a girl from outside their faith. He told her, “Mother! None of that is going to happen!” But, he adds, “Of course, she ended up being dead-on right.”

At Swarthmore he met his future wife, Laura Lein ’69. They married in 1975 and he joined the Society of Friends the same year. He was also in graduate school at MIT, where he was bitten by the AI bug. A math major at Swarthmore, he had always been interested in the question of whether the mind could be understood mathematically.

“I started hanging around the AI lab [at MIT], because my feeling is that AI is creating that mathematics,” he says. The branch of AI that called to him the most was common sense knowledge. How do you teach a computer the things that are so obvious we don’t even know how we know them?

Kuipers’ starting point, as a graduate student working with Marvin Minsky (“the ultimate hands-off advisor”), was to design a “cognitive map,” a computational representation of the space a robot is moving in, based solely on sensor data. He continued through a postdoctoral year, funded by the Defense Advanced Research Projects Agency in the Department of Defense, but then hit an ethical roadblock. He realized that his only prospective sponsors were agencies that wanted the technology to make cruise missiles. He resolved that, for the rest of his career, he would never accept funding from military sources.

Fortunately, within his chosen realm of common sense knowledge representation, there were plenty of other projects to work on. Kuipers enjoyed a long career at the University of Texas, where he mentored 32 Ph.D. students, and then at the University of Michigan, where he mentored five more. But as he approached retirement age, he began to explore another branch of the AI tree.

But how do you make decisions in a world with multiple intelligent agents, whether computers or humans? He realized that this was what ethics was all about. Ethics is the set of principles developed by society to guide us in our interactions with other people. It has long been the domain of philosophers, but the rise of AI has forced computer scientists to confront such questions, too.

One way to transmit ethical knowledge is through rule-making, or deontology. Classic examples are the Ten Commandments and Isaac Asimov’s Three Laws of Robotics. But as even Asimov knew, rule-making can get you in trouble. Who decides on the rules? What happens when the rules conflict? What about exceptions?

A second path is virtue ethics, the approach of Aristotle and Confucius, who asked how a virtuous person behaves. Through personal experience and stories, a culture can pass along its values to future generations. AIs have proved to be phenomenally good at learning from a database of annotated examples (for example, classifying images from an image database). But creating an “ethics database” would be fraught with challenges. Who would do the annotations? And would they agree? It’s much harder to say whether action A is ethical in situation S than to say whether a photo contains an image of a cat.

A third approach, very attractive to computer scientists and capitalists alike, is utilitarianism, which favors actions that lead to the greatest good for the greatest number. Each agent strives to maximize its own utility function. Some economists, notably Milton Friedman, have argued that this is the only rational behavior. But utilitarianism can also lead to serious failures. One of them is the Prisoner’s Dilemma, a situation in which two prisoners are charged with a crime. If one of them confesses and testifies against the other, he goes free and the other gets five years in jail. If they both confess, they both get three years. If neither confesses (that is, if they cooperate), each goes to jail for only one year. So cooperation is collectively the best course of action. But if each maximizes only his own individual utility, each is better off betraying the other, no matter what the other does: confessing means three years instead of five if the other confesses, and freedom instead of one year if the other stays silent. So they both confess, and get the worst possible collective outcome.
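The arithmetic behind the dilemma can be checked in a few lines. The following is a minimal sketch in Python, using only the jail terms given in the example above; the names `YEARS`, `best_response`, and `total_years` are illustrative choices, not anything from Kuipers' work.

```python
# Years in jail for (my_choice, other_choice) -> (my_years, other_years).
# The numbers come straight from the example in the text; lower is better.
YEARS = {
    ("confess", "confess"): (3, 3),
    ("confess", "silent"): (0, 5),
    ("silent", "confess"): (5, 0),
    ("silent", "silent"): (1, 1),
}
CHOICES = ("confess", "silent")


def best_response(other_choice):
    """Return the choice that minimizes my own jail time, given the other's choice."""
    return min(CHOICES, key=lambda mine: YEARS[(mine, other_choice)][0])


def total_years(choice_a, choice_b):
    """Return the combined jail time of both prisoners for a pair of choices."""
    return sum(YEARS[(choice_a, choice_b)])


# A self-interested prisoner confesses whatever the other one does...
responses = {other: best_response(other) for other in CHOICES}

# ...yet mutual confession is the costliest outcome for the pair as a whole.
totals = {pair: total_years(*pair) for pair in YEARS}

print(responses)
print(totals)
```

Under these payoffs, confessing is the best individual response in both cases, while the pair's combined sentence is smallest when both stay silent. The sketch models only the one-shot game as the text states it; it says nothing about trust, which is the gap Kuipers points to.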

The missing ingredient, Kuipers believes, is trust. Humans have evolved to be “super cooperators” (it’s our evolutionary advantage over the great apes), and trust makes cooperation possible. To trust, you have to be willing to accept a position of vulnerability, in the belief that the people you trust won’t take advantage of you. And to gain other people’s trust, it’s important to be able to signal your trustworthiness, which is why it pays to behave ethically.

The utility function in the Prisoner’s Dilemma ignores this fundamental aspect of human behavior. “It is the model that is wrong, not the math,” Kuipers says. “If you provide the wrong model, you’re going to get the wrong output.” These three different schools of ethics, each with its own unconscious bias, remind Kuipers of the fable of the six blind men examining an elephant, each one drawing a flawed conclusion from feeling different parts of the elephant’s body. Surely the best way toward ethical AI is some combination of all three schools. “A focus on trust and cooperation,” he says, “is the key to figuring this out.”

New Landscapes Emerge: AI Researchers See Risk and Promise

A Hidden Danger

Recently, OpenAI introduced the blander, more corporate-sounding ChatGPT-5, intending to install guardrails against the distorting and disorienting relationships people had developed with ChatGPT-4. But users raised a ruckus and the old version was revived.

Thomason argues in a recent Psychology Today article that there is a hidden danger here, noting that people referred to ChatGPT-4 as their “sidekick” and “buddy.”

“People want an easy friend with none of the responsibility, work, and pushback,” Thomason says in a recent video interview, noting that people use “diminutive terms” for their AI “companion” because “they see themselves as the intrepid hero with the little robot who is at their beck and call all the time.”

In the age of digital and social media, everything is on demand and personalized, which exacerbates our natural tendencies and the loneliness of modern society, she says. But while profit-driven algorithms that feed us continual dopamine hits have fueled this societal shift in social media and now AI, Thomason argues that the fault lies not just with our corporate overlords but also with ourselves.

“I can’t believe I have to convince people of this, but you have to take responsibility for your own mind,” she says.

“We don’t want any resistance or anything we have to negotiate, compromise, struggle with,” she says. “We don’t want to feel vulnerable. We want interactions where it’s all positive.”

But we’re missing something essential, says Thomason, author of Dancing With the Devil: Why Bad Feelings Make Life Good (Oxford University Press, 2023).

“Bad feelings are human and necessary, and you have to be willing to open yourself up to the world in a way that doesn’t always meet exactly your needs,” she says, adding that being human is “something we’re supposed to live up to — there’s an ethical dimension to it where you have to work and try to be better.”

She felt the guardrails of ChatGPT-5 were healthy because they reminded people “it’s not your buddy, it’s just a computer program.” But the backlash and capitulation leave her pessimistic.

“Frederick Douglass risked his life to learn how to read and now people can’t be bothered to read a five-minute article and ask ChatGPT,” she says. “That’s a massive unthinking betrayal of what it means to be a person in the world.

“This could be a moment where we could ask ourselves, ‘Is this who we want to be?’ But I feel that’s a question we’re not really asking.”

Letting Loose in Smallville

One recurring limitation of computer gaming is that the simulated worlds are often populated with robotic non-player characters, or NPCs, for human game players to interact with. NPCs usually obey a script and repeat basic actions that are programmed into them, making them flat and predictable.

Park led a team of researchers who used AI to create NPCs that have autonomy and personality traits that set them apart, apparently for the first time. The AI innovations could make games more immersive and engaging.

For their project, Park and the team created 25 AI-based NPCs that interact autonomously among themselves in Smallville. Each NPC starts as a one-paragraph biography. “It’s basically a natural language description along the lines of, ‘Isabella likes to help people and wants them to feel welcome,’” says Park.

Next, the team created what Park calls the agent architecture for the NPCs, which is essentially a memory bank that holds all background information the character needs to operate within the virtual village. “This is the data set that a character would pull from to help it decide on its next behavior within the simulation,” Park explains. “For instance, if a character had a croissant for breakfast the last three days, when he shows up at the Hobbs Cafe, it would use that information to generate an action. It’s like Google pulling up the most relevant information based on your question or search.”

The final layer of the character is the generative AI model. This model takes the information supplied by the agent architecture and executes a behavior for the character. “Since this character likes pastries for breakfast, when the character shows up at Hobbs Cafe, the model would use that information when ordering,” Park says. “It might order a pastry again, or change things up and get an egg sandwich.”
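The flow Park describes (a memory bank, a lookup of the most relevant memories, then a generative model that turns them into an action) can be sketched roughly as follows. This is a hypothetical illustration, not the team's implementation: the `Memory` class, the word-overlap-plus-recency scoring, and the prompt layout are all assumptions made for the example, and the final call to a generative model is left out because the article does not say which model or interface was used.

```python
from dataclasses import dataclass


@dataclass
class Memory:
    day: int   # simulation day on which the event was recorded
    text: str  # natural-language description of the event


def tokenize(text):
    """Lowercase a sentence and split it into a set of bare words."""
    return {word.strip(".,!?'\"").lower() for word in text.split()} - {""}


def score(memory, query, today):
    """Assumed relevance score: shared words plus a small bonus for recency."""
    overlap = len(tokenize(memory.text) & tokenize(query))
    recency = 1.0 / (1 + today - memory.day)
    return overlap + recency


def retrieve(memories, query, today, k=3):
    """Return the k memories that score highest for the current situation."""
    ranked = sorted(memories, key=lambda m: score(m, query, today), reverse=True)
    return ranked[:k]


def build_prompt(biography, recalled, situation):
    """Assemble the text a generative model would receive (format is made up)."""
    lines = [biography, "Relevant memories:"]
    lines += [f"- (day {m.day}) {m.text}" for m in recalled]
    lines += [f"Situation: {situation}", "What does the character do next?"]
    return "\n".join(lines)


# Illustrative memory bank for one character.
memories = [
    Memory(1, "Ate a croissant for breakfast at Hobbs Cafe"),
    Memory(2, "Ate a croissant for breakfast at Hobbs Cafe"),
    Memory(3, "Ate a croissant for breakfast at Hobbs Cafe"),
    Memory(2, "Talked with a neighbor about the weather"),
    Memory(1, "Watered the plants in the garden"),
]

situation = "Ordering breakfast at Hobbs Cafe"
recalled = retrieve(memories, situation, today=4)
prompt = build_prompt("This character likes pastries for breakfast.", recalled, situation)
print(prompt)
```

In this toy version the three croissant breakfasts outrank the unrelated memories, so they are what the model would see when deciding what the character orders. A real system would presumably use a more capable relevance measure than word overlap, and the model remains free to "change things up," as Park notes.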

When the team let the 25 NPCs loose in Smallville, the results surprised Park.

“There were a couple events that could not have been foreseen based on the data,” he says. For example, the day of the simulation is Feb. 13, and Isabella decides on her own to throw a Valentine’s Day party and send invitations to the other friends for the event. “We had no idea anything like that was going to happen.”

Teaching Robots to Predict

Giving AI that foresight is no mean feat. The world is messy and unpredictable. It’s full of people and objects that don’t follow patterns. Rhinehart’s work focuses on teaching machines to do what humans do constantly: imagining what might happen next and adjusting accordingly.

An assistant professor at the University of Toronto Robotics Institute, Rhinehart leads a lab focused on “model-based” methods — systems that learn to forecast what might happen next — and “reward learning” — the art of teaching robots what people want them to do. Together, these strands let robots anticipate the future, act safely, and improve over time.

“We want robots to combine learning to forecast the future with reward learning, so they can plan ahead, do what people actually want, and get better with experience,” says Rhinehart.

In his first year at Swarthmore, Rhinehart registered for an intro to computer science class on a whim. He later became hooked on the idea that machine learning could help solve meaningful problems.

Outside of academia, he has worked at the cutting edge of the self-driving car industry. Before joining the University of Toronto, Rhinehart spent 18 months as a research scientist at Waymo, the self-driving car company that operates its driverless taxis in seven U.S. cities. “I’m very proud of the impact I had,” he says.

Rhinehart is still focused on giving robots a clearer picture of human behavior. By being able to understand us, he says, they can help keep us safe.

“I’m excited by the possibility that people could benefit from more capable automation,” he says. “Whether that’s through robots that take on useful tasks, or AI that accelerates science.”

A Bespoke App

If you are a biotech researcher keeping up on the latest advances in your specific field, says Vigoda, you could connect the app to the journals you follow, and prompt the AI to fill your feed with exactly the kind of information you want.

Sagolla and Vigoda forged a natural friendship at Swarthmore, cemented in part during live performances on WSRN. “The show was on Fridays at noon, and it was called Keeps the Doctor Away,” says Sagolla, who is CEO of Gray Whale. “We’d take the speakers, face them toward Parrish Beach, and play the blues for an hour. It was a signal that the weekend was starting.”

Sagolla, a veteran Silicon Valley presence, co-conceived Twitter and made the original pitch that led his team to build the platform. Vigoda worked on early developments of the back-propagation algorithm, which forms the backbone of much of today’s artificial intelligence, and created the first microchips for deep learning (tensor processing units). He is currently CEO and chief scientist of Product Genius and is a strategic advisor for Gray Whale.

“Ben and I have been in both parallel and overlapping domains for a long time,” says Sagolla.

As Vigoda explains, online algorithms used by major tech platforms are built and controlled by the company, and are designed to benefit the platform’s owner.

“All the information is in one system, and we don’t know how it is deciding what to show us,” Sagolla says. “The big vision here is to let people create their own feeds and their own algorithm, which they can do because the prompts are clearly written.”

Gray Whale has invited users and developers to access its AI playground and create their own bespoke apps. The AI within the new app in turn reads and learns from user behavior (text searches, scrolling, and cursor movement) to deliver user-friendly results.

“Now that we have this new way of sharing and consuming information in individualized feeds, we are really interested in how to design the software and the corporate governance for positive societal impact,” Vigoda says. “We want to reach out to those who may have ideas or be interested in collaborating.”